In the past article, we talk about installing MySQL and we mentioned the 3 main items needed: a configuration file, a storage and configuring the port.

Installing PostgreSQL is not different, it has the same requirement.

Custom Configuration:

While you may not need any special customization, it wont hurt to have the file in your disposable, you can always get the full config file docker and then customize it, so to get it run the command:

docker run -i --rm postgres cat /usr/share/postgresql/postgresql.conf.sample > my-postgres.conf

You can now customize the file, like for example, change the listening address to * to allow all the devices on your network to access it, like this:

listen_addresses = '*' # what IP address(es) to listen on;

# comma-separated list of addresses;

# defaults to 'localhost'; use '*' for all

# (change requires restart)

#port = 5432 # (change requires restart)

#max_connections = 100 # (change requires restart)

If you don't have access to docker right now and you would like to check what other options you can change have a look at this online file.

Storage Configuration:

There is a lot of options here, but you need to familiarize yourself with what K3s storage provides, remember K3s is a slimier version of K8s so not everything is available for you to use. Luckily for us, the local storage option is available.

So, what we will define is a local storage with read/write access permission and with 5GB of space, we can use the following to create it:

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: postgres-pvc

namespace: postgres-server

spec:

accessModes:

- ReadWriteOnce

storageClassName: local-path

resources:

requests:

storage: 5Gi

Port Configuration

We are not going to change the default port, but we need to configure out cluster to allow the access to this port, so we have it like this:

apiVersion: v1

kind: Service

metadata:

name: postgres

namespace: postgres-server

spec:

selector:

app: postgres

type: LoadBalancer

ports:

- name: postgres-port

protocol: TCP

port: 5432

targetPort: 5432

Some of these topics may not be 100% clear to me, so I might also fail to explain, so if you have better way to explain it or know what I am doing wrong, please let me know.

Deployment

Now that we have the most basic information we need, we gather everything in two files, one big yml file and a small .conf file.

Lets call our yaml file postgres.yml and inside of it lets add the following:

---

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: postgres-pvc

namespace: postgres-server

spec:

accessModes:

- ReadWriteOnce

storageClassName: local-path

resources:

requests:

storage: 5Gi

---

apiVersion: v1

kind: Service

metadata:

name: postgres

namespace: postgres-server

spec:

selector:

app: postgres

type: LoadBalancer

ports:

- name: postgres-port

protocol: TCP

port: 5432

targetPort: 5432

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: postgres

namespace: postgres-server

spec:

replicas: 1

selector:

matchLabels:

app: postgres

template:

metadata:

labels:

app: postgres

name: postgres

spec:

nodeSelector:

kubernetes.io/hostname: worker-1

containers:

- name: postgres

image: ghcr.io/zaherg/postgres:latest

imagePullPolicy: Always

ports:

- name: postgres

containerPort: 5432

volumeMounts:

- name: postgres-storage

mountPath: /var/lib/postgresql/data

- name: postgres-config-volume

mountPath: /etc/postgresql/postgresql.conf

subPath: postgresql.conf

env:

- name: POSTGRES_PASSWORD

value: "secret"

- name: POSTGRES_USER

value: "postgres"

- name: POSTGRES_HOST_AUTH_METHOD

value: "trust"

volumes:

- name: postgres-storage

persistentVolumeClaim:

claimName: postgres-pvc

- name: postgres-config-volume

configMap:

name: postgres-config

items:

- key: main-config

path: postgresql.conf

I am using my own docker image which I have built based on latest version of ubuntu postgres image, the reason is that in my old experience you have to edit the pg_hba.conf file to make sure every device can access postgresql, it may not be needed any more, you can always check the official docker docs here about why I need to edit it, but for me I did that to give my development devices access to my RPI cluster, as I nowadays I use that cluster as part of my development setup.

Now that we have everything, we start executing the commands, but remember these commands should be run from within your Master PI.

First we need to create the namespace for our service, as I like to separate everything using the namespace, we do that by executing the command

kubectl create namespace postgres-server

Second, we create something called the configuration map, which will holds our PostgreSQL custom configuration

kubectl create configmap postgres-config --from-file=main-config=my-postgres.conf -n postgres-server

Lastly, we need to create the storage, the service and the deployment, luckily we have stored those all inside our postgres.yml file we can run the following command to create them at once:

kubectl apply -f postgres.yml

To check that everything is running we can run the following command:

kubectl get svc -n postgres-server

If everything was okay, you will get a result similar to this one:

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE postgres LoadBalancer 10.43.97.220 192.168.68.110,192.168.68.111,192.168.68.112 5432:32688/TCP 20d

I have a small github repo which contains all the code if you like to check, you can find it here.

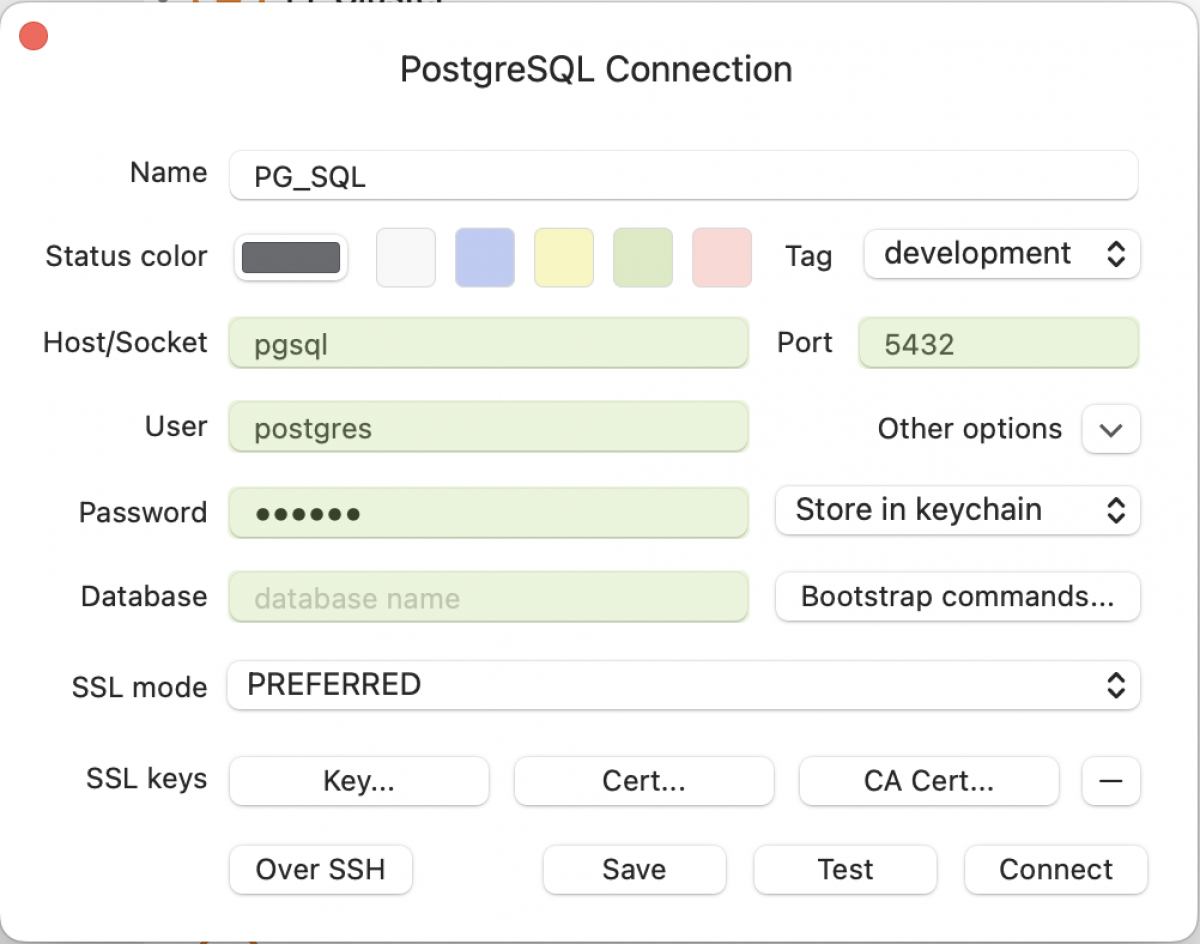

Now, you can connect to it via any apps using the following info (password is "secret").

Remember to change the host to the IP of your RPI, as for me I have the IP aliased to the service name postgres in my /etc/hosts file.

Next step we will discuss how to automatically backup our PostgreSQL databases once every day (or more).